Tag: Technology

Read Time: 9 Minutes

Entropik Team

Launching ads without enough evidence is expensive. It can waste media budget, slow down optimization, and leave teams guessing whether weak performance came from the creative, the message, the offer, or the audience fit.

That is where ad testing matters. It helps marketers make better campaign decisions before and after launch by showing what is likely to resonate, what needs to change, and what should not be scaled yet.

But ad testing is often treated too narrowly. Some teams think of it only as A/B testing live ads, while others reduce it to broad creative feedback. In practice, it is wider than both.

This guide breaks down what ad testing actually includes, what parts of an ad teams can test, the most common ad testing methods, the metrics that matter, and how AI can support faster creative evaluation without turning the process into a black box.

What is ad testing?

Ad testing is the process of evaluating ads before or during a campaign to understand what is likely to work, what may need improvement, and what should change before more budget is committed.

That evaluation can happen at different points:

before launch, to reduce risk

during a campaign, to improve optimization

across iterations, to compare messages, formats, creatives, or offers over time

Importantly, ad testing is broader than any single method. It can involve:

testing the creative itself

evaluating message clarity

comparing concepts or formats

checking how audiences respond

reviewing performance signals after launch

So while creative testing is one part of ad testing, it is not the whole thing. That distinction matters because the broader the campaign question, the broader the testing approach often needs to be.

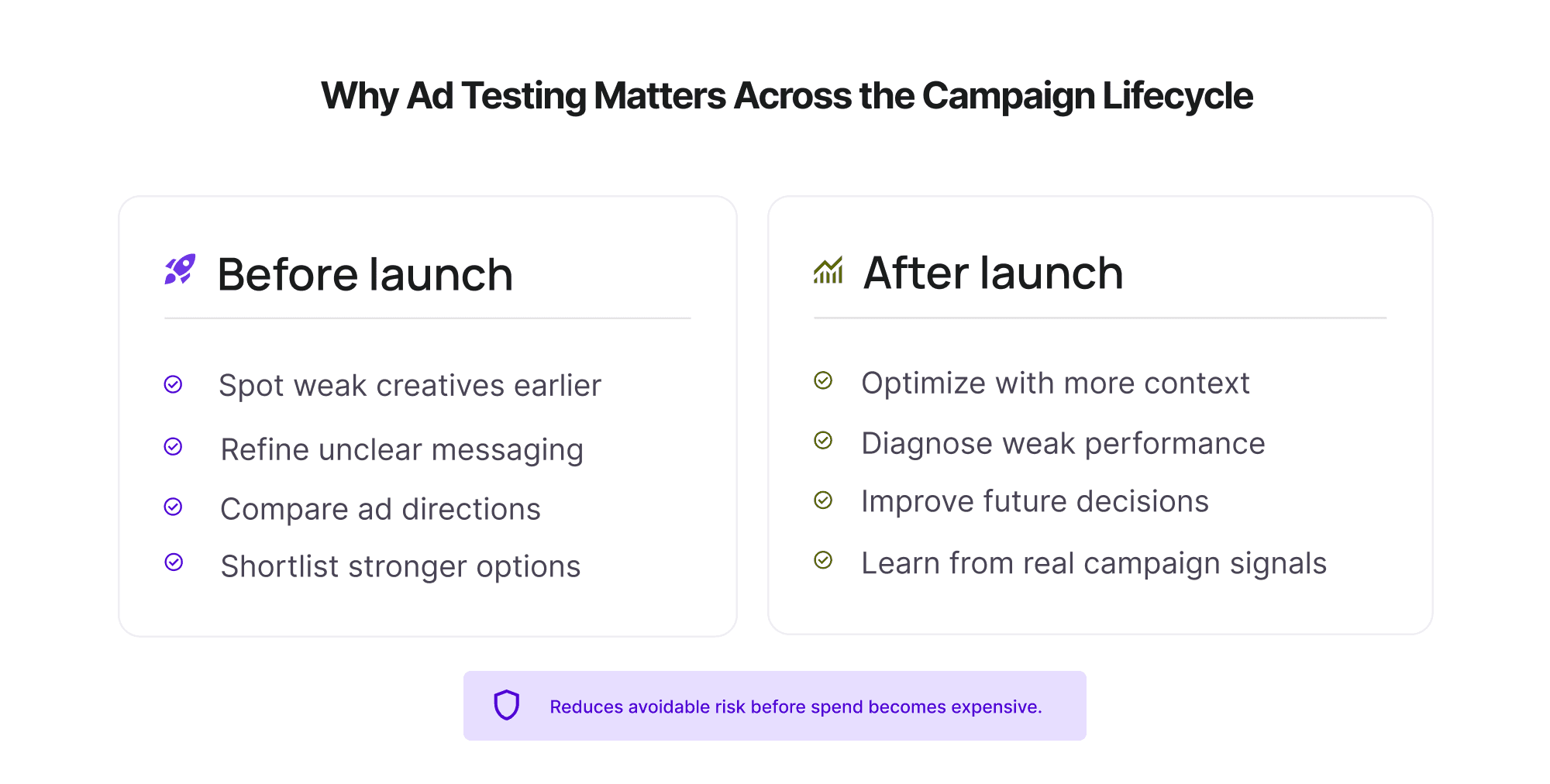

Why ad testing matters before and after launch

The main value of ad testing is not just that it produces data. It helps teams make better decisions with less unnecessary risk.

Before launch, it can help teams:

spot weak creatives earlier

refine unclear messaging

compare ad directions before scaling spend

shortlist stronger options for launch

After launch, it can help teams:

optimize with more context

understand whether weak results are tied to message, execution, audience, or offer

improve future campaign decisions using what has already been learned

This matters because many campaigns do not fail for one simple reason. An ad can miss because the message is unclear, the visual does not stand out, the CTA is weak, the offer is not compelling, or the audience is wrong. Without ad testing, teams often discover those issues only after money has already been spent.

Done well, it gives teams a clearer way to decide:

what to launch

what to refine

what to pause

what to test again

It is not about removing all uncertainty. It is about reducing avoidable uncertainty before it becomes expensive.

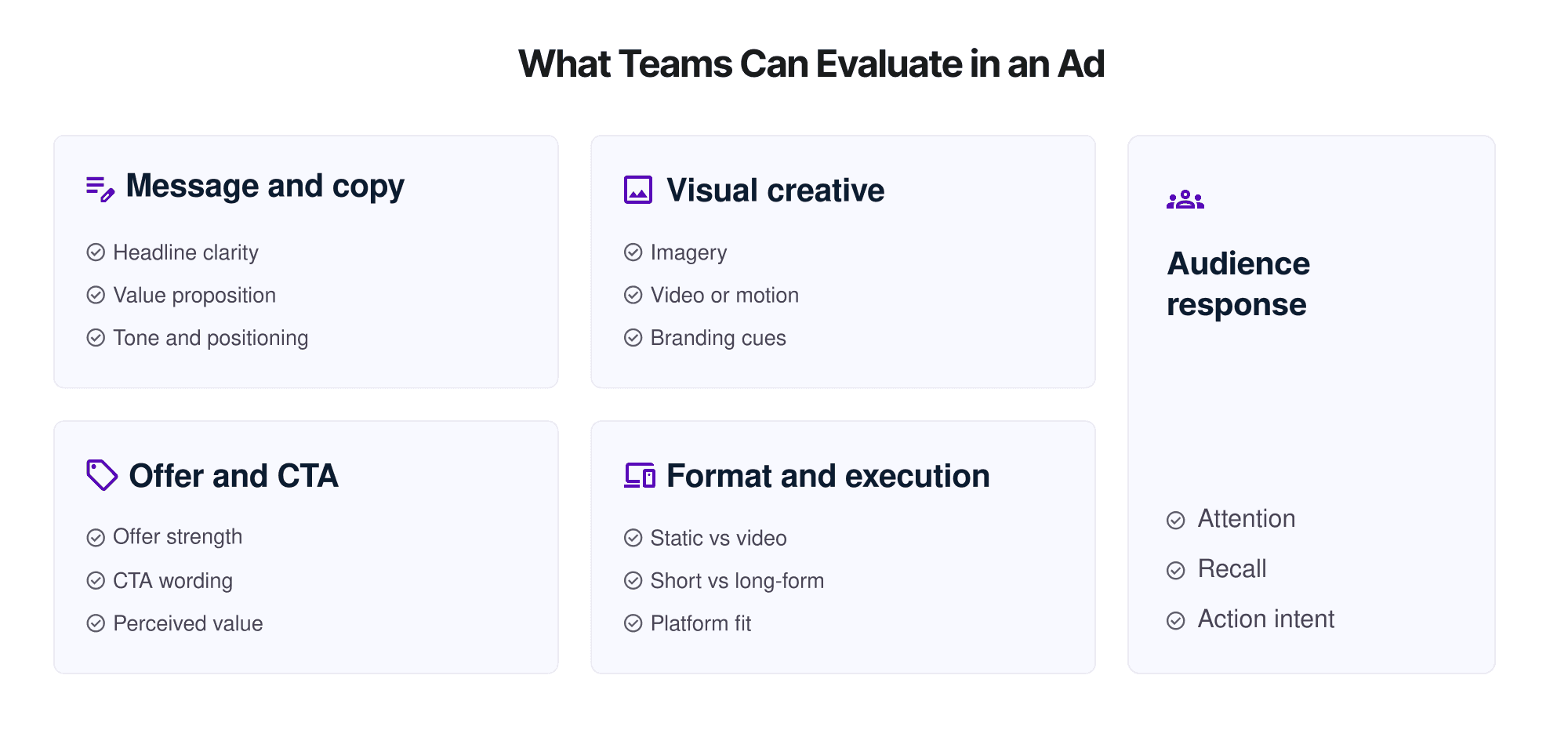

What can teams test in an ad?

One reason ad testing is broader than creative testing is that teams can evaluate more than just “the ad creative” in a narrow sense.

Message and copy

A strong visual can still underperform if the message is unclear or unconvincing. Teams can test:

headline clarity

body copy

value proposition

message hierarchy

tone and positioning

This is especially useful when campaigns are trying to communicate something new or complex.

Visual creative

This is the part most people think of first. It includes:

imagery

video or motion

design clarity

visual hierarchy

branding cues

distinctiveness

This is where ad creative testing becomes especially relevant, because visual execution can influence attention, recognition, and message absorption.

Offer and CTA

Sometimes the ad is not weak, but the offer is. Or the CTA does not give enough reason to act. Teams can test:

offer strength

urgency

CTA wording

perceived value

Format and execution

An idea that works in one format may underperform in another. Teams can compare:

static vs video

short-form vs long-form

platform-specific adaptations

mobile-first vs desktop-friendly execution

Audience response

Not every useful signal comes from clicks alone. Teams can also test for:

attention

clarity

emotional response

recall

action intent

brand linkage

This is one reason ad testing is more useful when it combines pre-launch evaluation with post-launch performance signals.

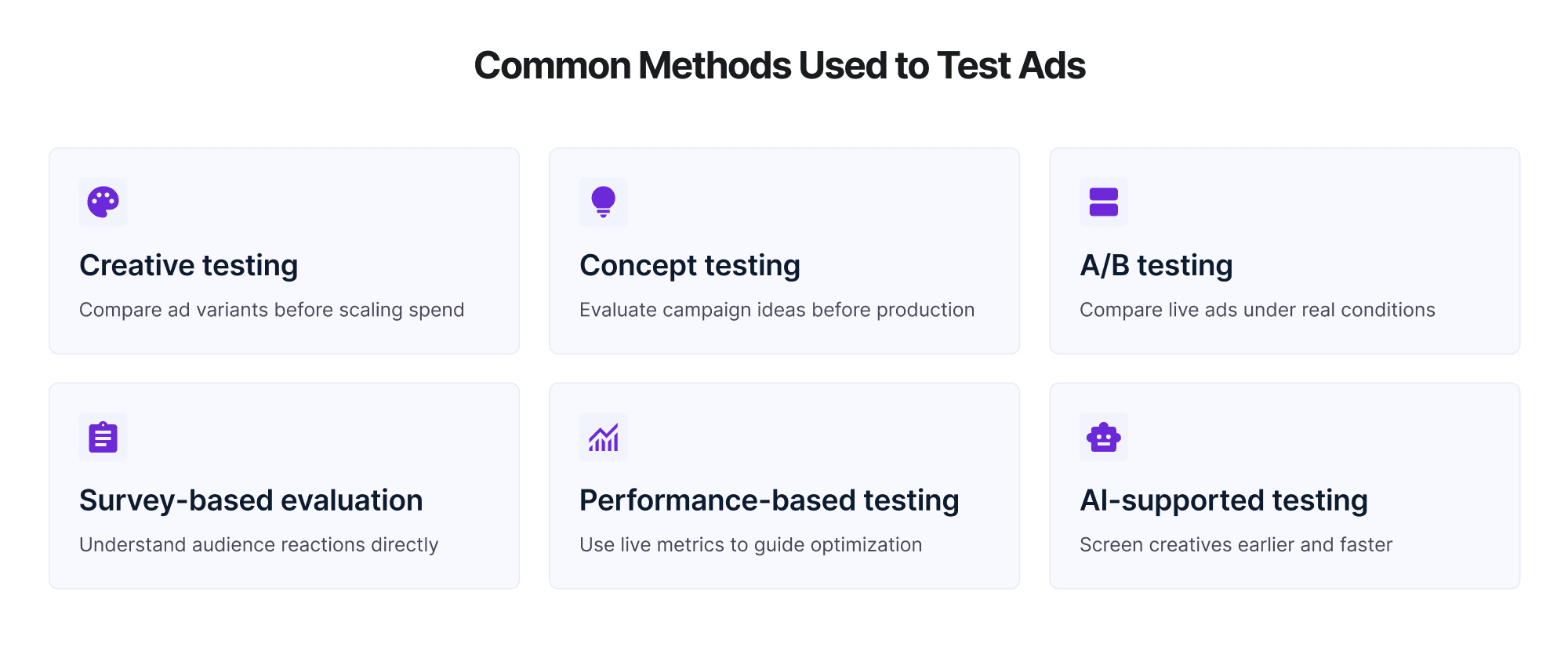

Common ad testing methods teams use

There is no single “best” method for every campaign. The right method depends on what the team is trying to decide, what stage the campaign is in, and what kind of evidence is needed.

Creative testing

Creative testing focuses on the ad itself. It helps teams evaluate which creative directions are stronger before scaling spend. This can include:

comparing multiple ad variants

assessing which visuals are clearer or more attention-grabbing

identifying which creative is more likely to resonate

This is often one of the most useful ad testing methods before launch, especially when the team is choosing between multiple assets.

Concept testing

Concept testing happens earlier. Instead of testing final ad creative, teams test the underlying idea or campaign direction before full production. This is useful when the decision is still strategic rather than executional.

Best used when:

the campaign idea is still early

production costs are high

teams want directional validation before finalizing assets

A/B testing

A/B testing compares two or more live versions of an ad to see which performs better under real campaign conditions. It is powerful, but it is not a full replacement for earlier pre-launch testing.

Best used when:

the campaign is live

the team is optimizing one or two clear variables

the goal is performance improvement in-market

Survey-based ad evaluation

Some teams use survey or response-based approaches to understand how audiences perceive an ad. This can help assess:

message clarity

offer understanding

brand association

recall or reaction

Best used when:

the team wants direct audience feedback

the question goes beyond live performance data

the campaign goal includes brand perception, not just conversion

Performance-based testing

Performance-based testing uses live campaign signals such as:

CTR

completion rate

conversions

cost efficiency

engagement metrics

Best used when:

the campaign is already running

the team needs real-world behavior data

optimization decisions depend on in-market performance

This is valuable, but it works best when combined with pre-launch evaluation and direct audience feedback, not used as the only source of truth.
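As a rough illustration, the live signals listed above can be derived from raw campaign counts. The sketch below is a minimal example under stated assumptions: the `AdStats` field names are hypothetical, and conversion rate is defined here as conversions per click, which is only one of several common definitions.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class AdStats:
    """Raw counts for one ad. Field names are illustrative, not a platform schema."""
    impressions: int
    clicks: int
    conversions: int
    spend: float
    video_starts: int = 0
    video_completions: int = 0


def safe_ratio(numerator: float, denominator: float) -> Optional[float]:
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def summarize(stats: AdStats) -> Dict[str, Optional[float]]:
    """Turn raw counts into the performance signals discussed above."""
    return {
        "ctr": safe_ratio(stats.clicks, stats.impressions),
        # Assumption: conversion rate is measured per click, not per impression.
        "conversion_rate": safe_ratio(stats.conversions, stats.clicks),
        "cost_per_conversion": safe_ratio(stats.spend, stats.conversions),
        "completion_rate": safe_ratio(stats.video_completions, stats.video_starts),
    }


# Made-up numbers, for illustration only.
example = AdStats(impressions=10_000, clicks=250, conversions=20, spend=500.0)
print(summarize(example))
```

Metrics with no data behind them (for example, completion rate on a static ad) come back as `None` rather than zero, so a missing signal is not mistaken for a poor one.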

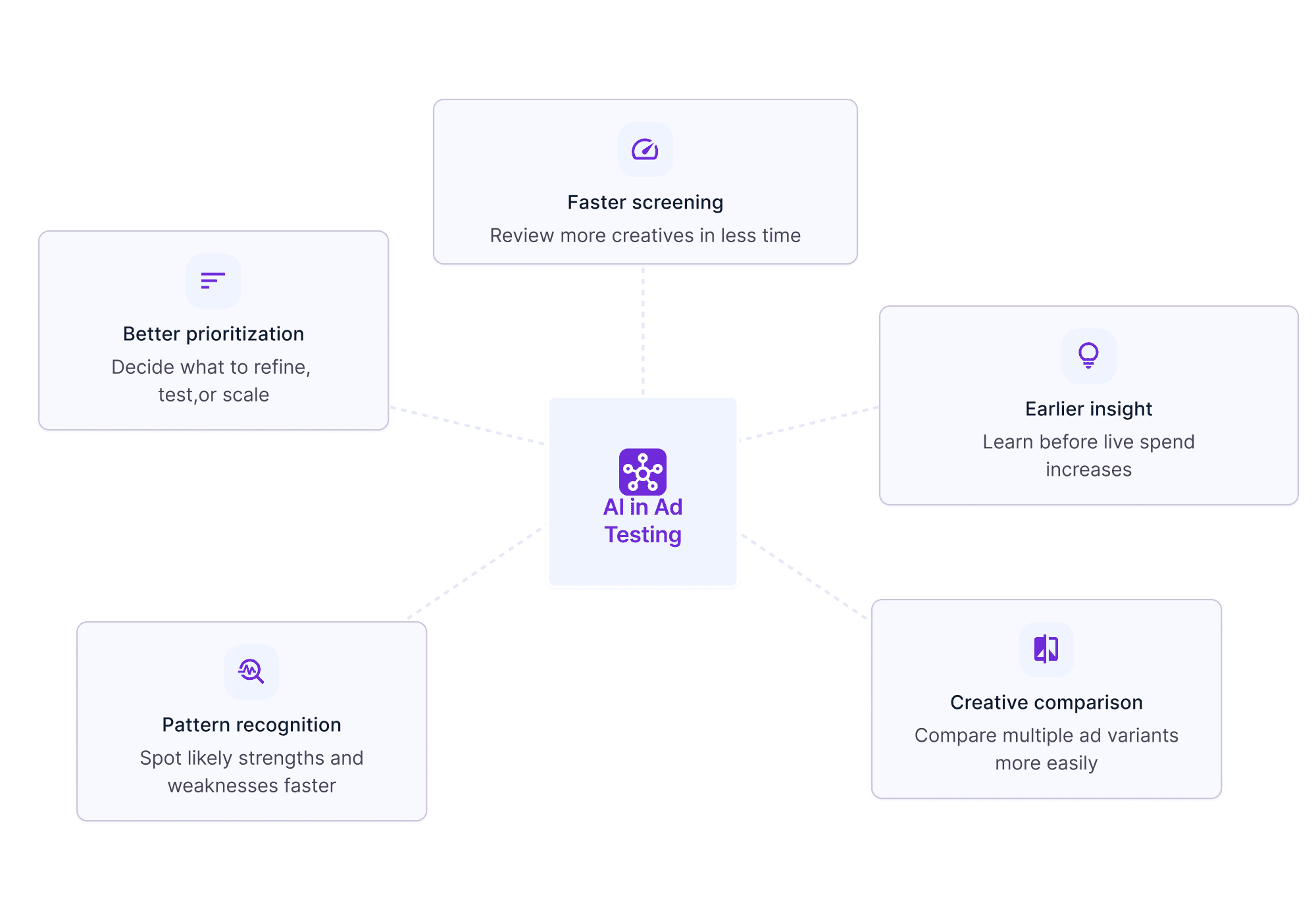

AI-supported ad testing

AI-supported testing helps teams evaluate creatives earlier and faster, especially when they need to screen multiple assets before launch. It can support:

faster creative comparison

earlier pattern recognition

screening before heavy media spend

identifying likely strengths and weaknesses in an ad

Best used when:

teams need speed

creative volume is high

pre-launch decisions need more evidence than intuition alone

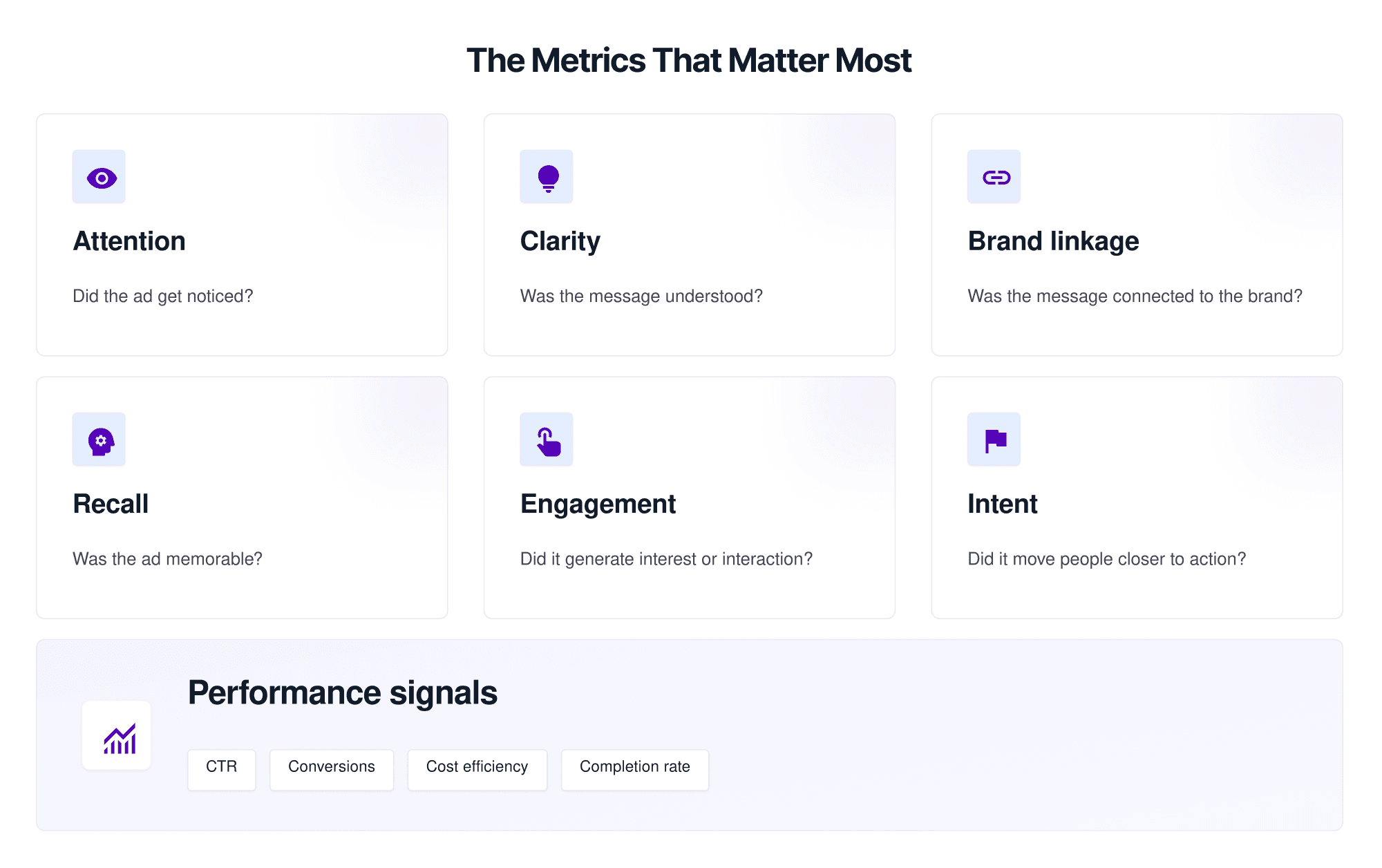

What metrics matter in ad testing?

A common mistake in evaluating ads is treating all campaigns as if they should be judged by the same metric. In reality, the right metric depends on the campaign goal.

Attention

Did the ad capture notice in the first place? If an ad fails here, the rest of the message may never get a real chance.

Clarity and message comprehension

Did people understand what the ad was trying to communicate? This matters especially when the product, offer, or message is complex.

Brand linkage

Did the audience connect the message or visual to the right brand? Strong creative can still underperform if the brand connection is weak.

Recall

Was the ad memorable enough to stick? This is especially useful in awareness and brand-building campaigns.

Engagement

Did the ad trigger interest or interaction? Depending on the platform, this may include click behavior, time spent, or social engagement signals.

Intent

Did the ad move the audience closer to action? This may include purchase intent, sign-up intent, or other next-step signals.

Performance signals

After launch, teams may also look at:

CTR

conversion rate

cost efficiency

video completion rate

landing page behavior

These are important ad testing metrics, but they should be interpreted in context. A click-focused metric is not always the best indicator for a campaign built around recall or brand lift.

A better way to think about metrics is:

awareness campaigns → attention, recall, brand linkage

consideration campaigns → clarity, engagement, intent

conversion campaigns → action signals, offer response, cost efficiency
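One way to make this mapping hard to skip is to write it down as a lookup that a test plan has to pass through. The sketch below simply encodes the three lines above; the function name and the error handling are assumptions for illustration.

```python
from typing import Dict, List

# Campaign goal -> primary metrics, copied from the mapping above.
METRICS_BY_GOAL: Dict[str, List[str]] = {
    "awareness": ["attention", "recall", "brand linkage"],
    "consideration": ["clarity", "engagement", "intent"],
    "conversion": ["action signals", "offer response", "cost efficiency"],
}


def primary_metrics(goal: str) -> List[str]:
    """Return the metrics a campaign with this goal should be judged on first."""
    key = goal.strip().lower()
    if key not in METRICS_BY_GOAL:
        known = ", ".join(sorted(METRICS_BY_GOAL))
        raise ValueError(f"Unknown campaign goal '{goal}'. Expected one of: {known}")
    return list(METRICS_BY_GOAL[key])


print(primary_metrics("Awareness"))
```

Rejecting unknown goals, rather than falling back to a default metric, forces the team to name the campaign objective before any results are read.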

Best practices for better ad testing

The practices below are what turn ad testing from a reporting exercise into a decision-making tool.

Start with one clear decision

Before choosing a method, decide what you are trying to answer. For example:

Which creative should we launch?

Is the message clear enough?

Which CTA performs better?

Should we refine this concept or drop it?

The clearer the decision, the better the testing setup.

Test the right variable

A weak setup often tests too many things at once. If the headline, image, offer, and CTA all change together, the result may be harder to interpret. Good ad testing techniques are focused enough to produce usable findings.
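A focused setup can be sketched as a small helper that builds variants differing from a control ad in exactly one field. This is a minimal illustration, not a prescribed workflow: the field names, the ad copy, and the `single_variable_variants` helper are all hypothetical.

```python
from typing import Dict, List


def single_variable_variants(
    control: Dict[str, str], alternatives: Dict[str, List[str]]
) -> List[dict]:
    """Build variants that each differ from the control in exactly one field."""
    variants = []
    for field, options in alternatives.items():
        if field not in control:
            raise KeyError(f"'{field}' is not a field of the control ad")
        for option in options:
            if option == control[field]:
                continue  # identical to the control, nothing to test
            variant = dict(control)  # copy so the control is never mutated
            variant[field] = option
            variants.append({"changed": field, "ad": variant})
    return variants


# Hypothetical control ad and alternatives, for illustration only.
control_ad = {
    "headline": "Save time on reports",
    "image": "dashboard.png",
    "cta": "Start free trial",
}
alternatives = {
    "headline": ["Reports in minutes", "Stop building reports by hand"],
    "cta": ["See a demo"],
}

for variant in single_variable_variants(control_ad, alternatives):
    print(variant["changed"], "->", variant["ad"][variant["changed"]])
```

Because every variant records which field changed, any difference in results can be traced back to a single element rather than to a bundle of simultaneous changes.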

Match the method to the campaign stage

Different stages call for different methods:

early concept stage → concept testing

pre-launch ad selection → creative testing

live campaign improvement → A/B or performance-based testing

This is where many teams improve results simply by choosing the right approach earlier.

Define success before testing

Do not wait until results arrive to decide what counts as good. Define what success looks like before the test begins.
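In practice, this can be as simple as recording thresholds before the test runs and checking results against them afterwards. The sketch below assumes hypothetical metric names and threshold values; real targets depend on the campaign goal and historical benchmarks.

```python
import operator
from typing import Dict, Optional, Tuple

COMPARATORS = {">=": operator.ge, "<=": operator.le}

# Written down BEFORE the test starts. Values are placeholders, not benchmarks.
SUCCESS_CRITERIA: Dict[str, Tuple[str, float]] = {
    "ctr": (">=", 0.02),
    "cost_per_conversion": ("<=", 30.0),
}


def check_criteria(
    results: Dict[str, Optional[float]],
    criteria: Dict[str, Tuple[str, float]],
) -> Dict[str, bool]:
    """Report, per metric, whether the pre-registered threshold was met."""
    outcome = {}
    for metric, (comparison, threshold) in criteria.items():
        value = results.get(metric)
        # A missing metric counts as not met rather than being silently skipped.
        outcome[metric] = value is not None and COMPARATORS[comparison](value, threshold)
    return outcome


# Made-up results, for illustration only.
observed = {"ctr": 0.025, "cost_per_conversion": 35.0}
print(check_criteria(observed, SUCCESS_CRITERIA))
```

Keeping the direction of each comparison explicit matters because some metrics should go up (CTR) while others should go down (cost per conversion).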

Combine pre-launch and post-launch learning

Pre-launch testing helps reduce risk. Post-launch data helps confirm or refine real-world performance. Strong teams use both.

Interpret results in context

A weak result does not always mean the ad itself is bad. It may reflect the wrong audience, wrong format, wrong metric, or wrong stage of evaluation. This is why ad testing works better when findings are tied back to campaign goals, not read in isolation.

Common mistakes teams make with ad testing

Even teams that test regularly can still make avoidable mistakes.

Testing too late

If the first serious learning happens only after media spend increases, optimization becomes more expensive than it needed to be.

Testing too many variables at once

This makes results harder to interpret and decisions harder to defend.

Relying only on post-launch performance

Live campaign data is important, but it should not be the only place teams learn. Sometimes the smarter move is to evaluate likely issues before the campaign scales.

Treating ad testing and creative testing as the same thing

This narrows the scope of learning. Creative testing is important, but ad testing includes more than just comparing assets.

Using the wrong metric for the goal

An awareness campaign should not be judged only by CTR. A conversion campaign should not rely only on soft engagement signals.

Drawing conclusions from weak or noisy signals

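A small difference between two variants is not always a meaningful one, especially when the number of impressions or responses is low. Good ad testing requires judgment about sample size and noise, not just a comparison of numbers.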
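One common way to check whether a gap between two variants is likely to be noise is a two-proportion z-test on their click-through rates. The sketch below uses only the Python standard library. It is one possible check rather than a complete methodology: it assumes independent impressions and reasonably large samples, and the click counts are invented for illustration.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    clicks_a: int, impressions_a: int, clicks_b: int, impressions_b: int
) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in CTR between B and A."""
    rate_a = clicks_a / impressions_a
    rate_b = clicks_b / impressions_b
    # Pooled rate under the null hypothesis that both ads share one true CTR.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_error = math.sqrt(
        pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b)
    )
    if std_error == 0:
        raise ValueError("Cannot test: pooled rate is 0 or 1, so there is no variance")
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented counts: 2.00% vs 2.15% CTR on 10,000 impressions each.
z_small, p_small = two_proportion_z_test(200, 10_000, 215, 10_000)
print(f"small gap: z={z_small:.2f}, p={p_small:.3f}")

# Invented counts: 2.00% vs 3.00% CTR on the same volume.
z_large, p_large = two_proportion_z_test(200, 10_000, 300, 10_000)
print(f"large gap: z={z_large:.2f}, p={p_large:.6f}")
```

A high p-value does not prove the two ads perform the same. It only means the test did not collect enough evidence to tell them apart, which is often a reason to keep the test running rather than to declare a winner.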

How AI can improve ad testing

AI is becoming more useful in this workflow because it helps teams evaluate ad quality earlier.

Used well, it can support:

faster creative screening

earlier insight before live spend

easier comparison across multiple ad variants

stronger pattern recognition around attention, clarity, or likely effectiveness

This is where AI creative testing becomes practically relevant. Instead of waiting for campaign data alone, teams can evaluate which creatives appear stronger before launch and use that insight to refine or prioritize assets.

That does not mean AI should replace all other methods. It should support better and earlier decision-making. In most cases, the strongest workflow still combines:

pre-launch evaluation

creative judgment

campaign context

post-launch performance data

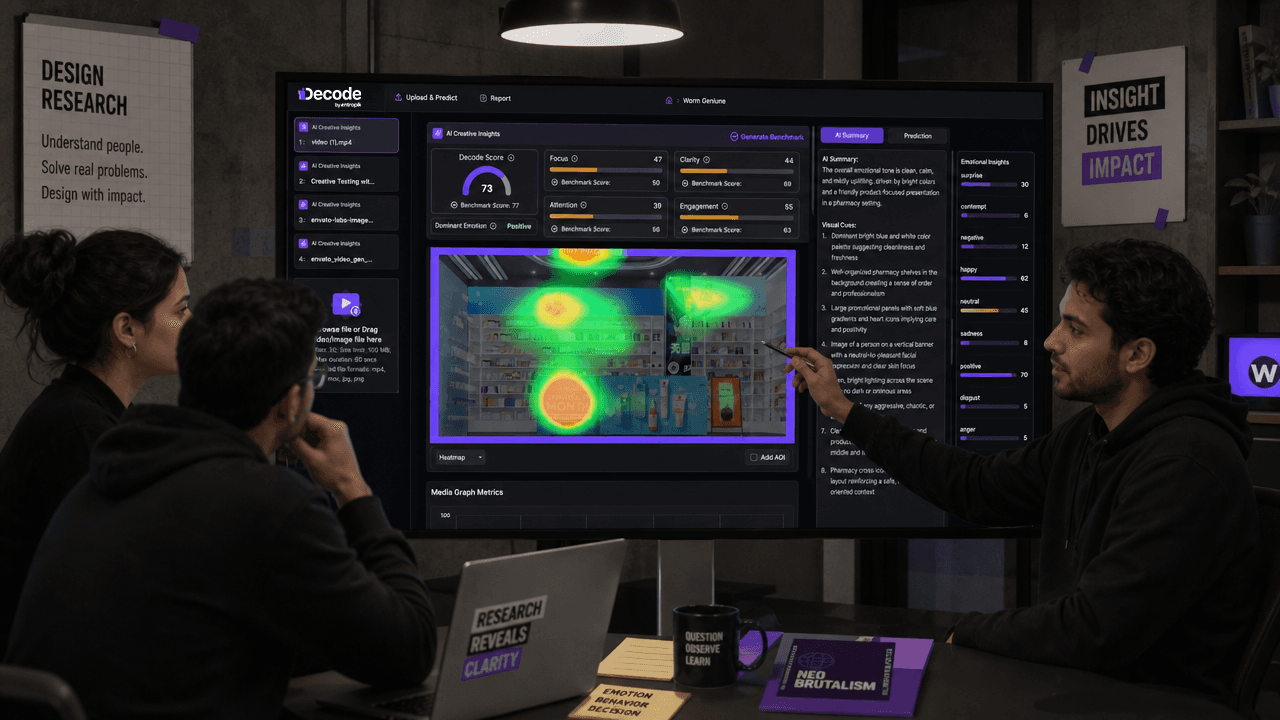

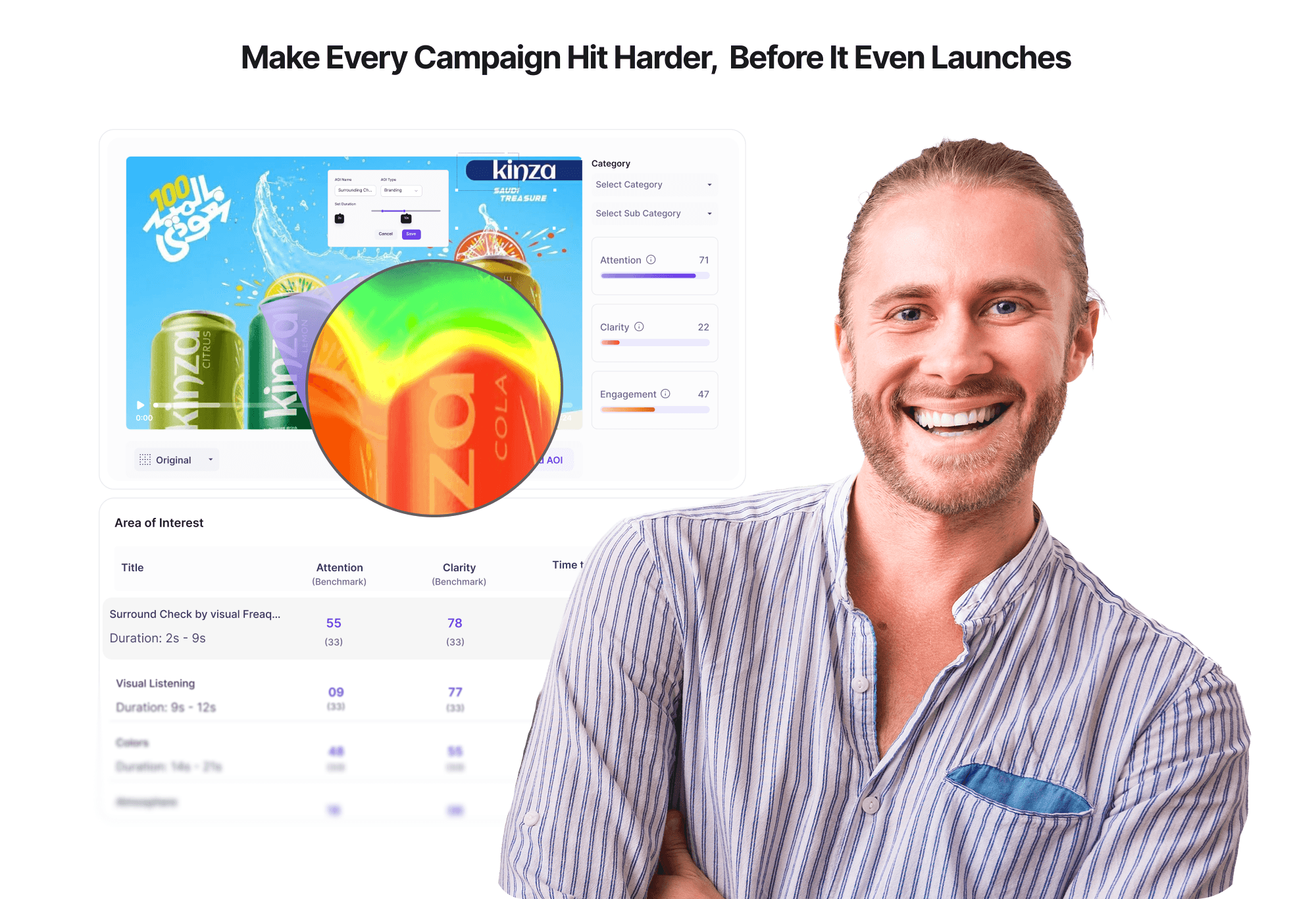

Where AI Creative Insights fits into the workflow

For teams trying to improve ad testing without turning it into a slow or expensive process, AI-supported creative evaluation can be especially useful before launch.

This is where Decode by Entropik, through AI Creative Insights, can fit into the workflow. Rather than acting as a replacement for all ad testing, it can help teams:

assess creative performance earlier

identify likely strengths and weaknesses in ad assets

understand which creatives may deserve deeper testing or prioritization

support faster decisions before media spend scales

That makes it useful as part of a broader ad performance testing process, especially when teams are managing multiple ad variants and need a faster way to narrow the field.

The important point is not “AI instead of testing.” It is “AI as part of a smarter ad testing workflow.”

Final thoughts

Ad testing is broader than one method, one metric, or one campaign stage.

It includes pre-launch learning, post-launch optimization, creative evaluation, performance review, and better decision-making across the campaign lifecycle. Creative testing is one important part of that process, but it should sit inside a broader ad testing strategy rather than stand in for it.

The strongest teams usually do three things well:

they test with a clear decision in mind

they match the method to the question

they use both early evaluation and real-world campaign signals

That is what makes ad testing useful in practice. Not testing for the sake of testing, but testing in a way that leads to better campaign decisions.

FAQs

What is ad testing?

Ad testing is the process of evaluating ads before or during a campaign to understand what is likely to work, what needs improvement, and what changes should be made before more budget is committed.

Why is ad testing important?

Ad testing helps reduce guesswork, improve campaign decisions, identify weak ads earlier, and strengthen both pre-launch and post-launch optimization.

What is the difference between ad testing and creative testing?

Ad testing is the broader process of evaluating ads across methods, metrics, and campaign stages. Creative testing is one part of ad testing that focuses specifically on the ad creative itself.

What should teams test in an ad?

Teams can test message, visuals, CTA, offer, format, audience response, attention, clarity, and performance-related signals depending on the campaign goal.

What metrics matter in ad testing?

The most useful metrics depend on the objective, but common ones include attention, clarity, recall, engagement, intent, brand linkage, and post-launch performance indicators.

How can AI improve ad testing?

AI can help teams evaluate creatives earlier, compare assets faster, identify likely strengths or weaknesses, and support better pre-launch decisions before spend scales.
