Tag: Research

Read time: 7 minutes

Content: Entropik Team

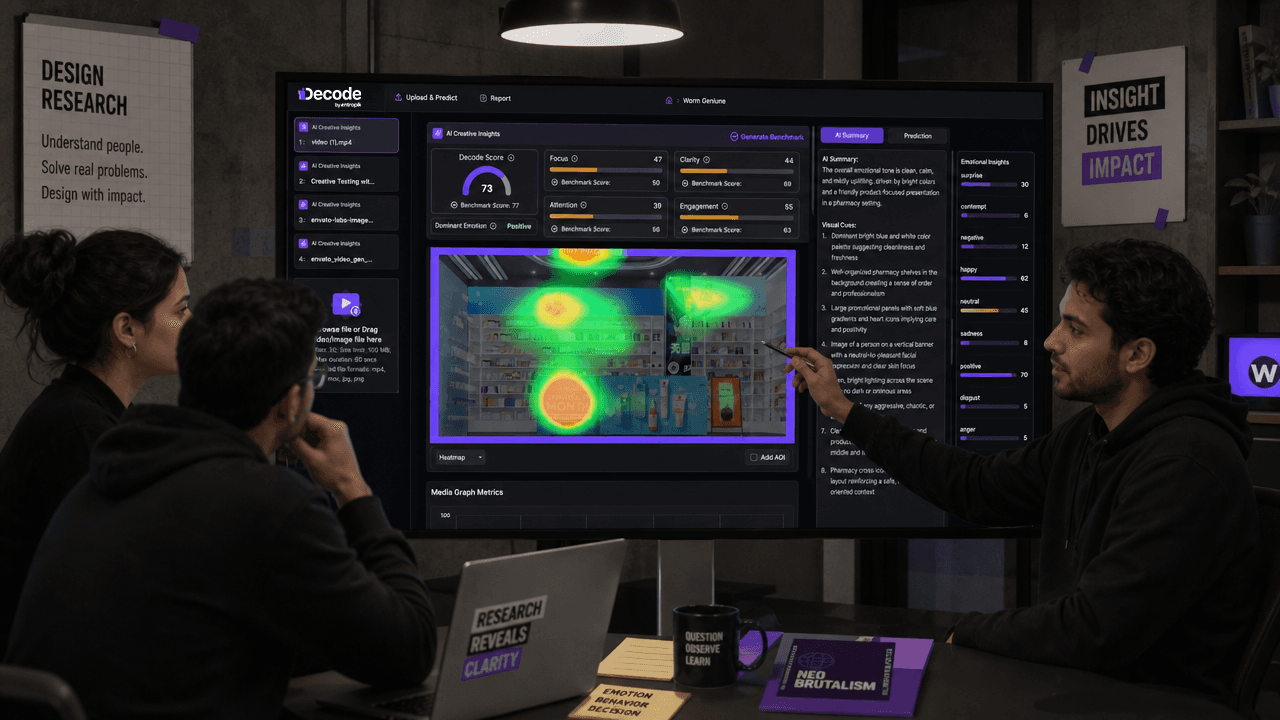

AI is becoming part of how many teams plan, run, and analyze research. It can help reduce repetitive work, speed up synthesis, and make it easier to collect input at scale. That growing adoption has made AI in UX research an important topic for teams trying to learn faster without lowering the quality of their decisions.

But the most useful question is not whether AI can be used in UX research.

It is where it fits best.

That is especially true for AI moderated research. In the right situations, it can help teams run lightweight studies faster, gather broader qualitative input, and keep research moving between larger projects. In the wrong situations, it can create the appearance of speed while missing the nuance, trust, or context that human moderation brings.

So the real opportunity is not to use AI everywhere. It is to understand where AI-supported methods are genuinely useful, where they need human oversight, and where human-led research is still the better choice.

This guide looks at where AI fits in UX research workflows, where AI moderated research works best, where it falls short, and how teams can use it more thoughtfully.

What does AI in UX research actually include?

When people talk about AI in UX research, they usually mean more than one capability. AI can support several stages of the research workflow, from planning through moderation to synthesis and reporting.

Depending on the team and the use case, it may help with:

drafting research plans

organizing discussion guides

moderating structured interviews or follow-up questions

summarizing transcripts

clustering themes across responses

supporting analysis

turning findings into shareable reports

In that broader landscape, AI moderated research is one specific use case. It is not the full story of AI in research, and it should not be treated as a replacement for every kind of human-led study.

That distinction matters. Once teams treat AI moderation as one method within a larger workflow, it becomes easier to decide when it is useful and when it is not.

Where AI moderated research fits best in UX research

One of the biggest mistakes teams make is thinking about AI moderation as a direct substitute for traditional interviews. In practice, it is more useful to think of AI moderated research as a method that fits certain research needs better than others. It tends to work best when the objective is clear, the study is reasonably scoped, and the team needs faster or broader input without losing basic structure.

That is why the more useful question is where AI fits across the workflow, not simply whether it can be used. AI moderation tends to be most useful where speed, repeatability, and scale matter more than improvisation and depth.

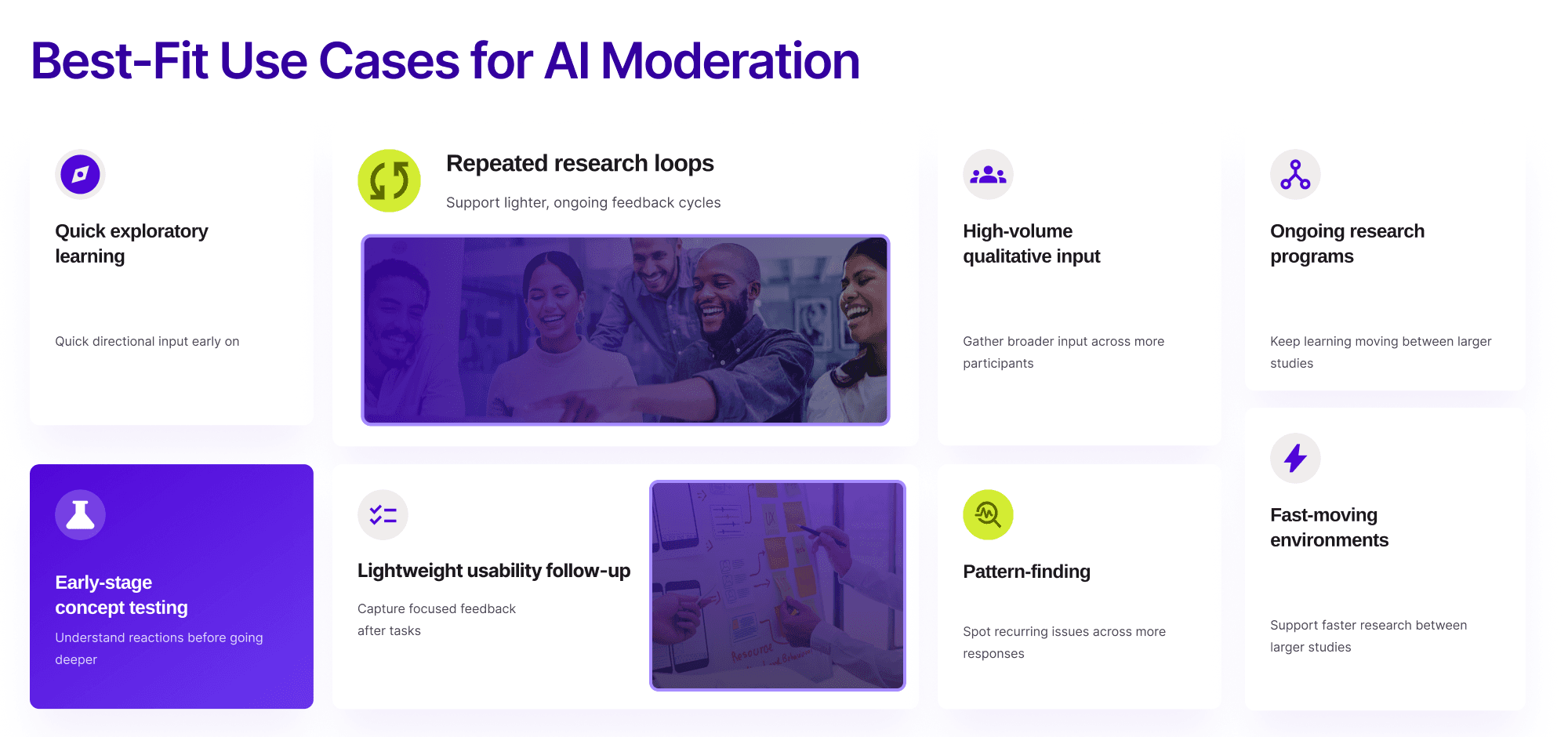

Here are some of the situations where AI moderated research often works well:

Quick exploratory learning

At the start of a project, teams often need quick directional input. They may want to understand initial reactions, surface recurring pain points, or identify questions worth exploring further. In those cases, AI moderation can help collect useful early signals without the overhead of a fully manual study.

Repeated research loops

Not all research is a one-off study. Many teams need ongoing feedback as concepts evolve, prototypes improve, or product flows change. AI moderated research can support these lighter, more repeatable loops.

High-volume qualitative input

Sometimes the goal is not one deep conversation with a few users, but a broader set of qualitative responses across more participants. Because AI moderation does not require a researcher to lead every session, it can make that kind of coverage practical. This is one reason teams exploring AI qualitative research are paying closer attention to AI-supported workflows.

Early-stage concept testing

In early concept work, teams often want to understand whether an idea is clear, relevant, or confusing before investing in deeper rounds of research. AI-supported studies can help collect that first layer of feedback faster.

Lightweight usability follow-up

For focused usability questions, especially after a task or interaction, AI moderation can help gather follow-up input at scale. This tends to work best when the questions are clear and the learning goal is well defined.

Pattern-finding across many responses

One practical strength of AI user research workflows is that they can help teams collect and organize input from more participants than a small team could usually moderate manually. That can be helpful when the goal is to look for recurring problems, reactions, or themes.

Ongoing or distributed research programs

Teams building an always-on research motion may use AI to keep collecting user input between larger studies. In that setup, AI in user research becomes less about replacing researchers and more about supporting continuity.

Fast-moving product environments

Some teams work in short cycles and need insight quickly enough to shape near-term decisions. In those situations, AI moderation can support a lighter research cadence between larger human-led studies.

The value here comes from fit. AI moderated research is generally strongest when it supports fast, structured learning rather than trying to replicate every strength of human-led research. More broadly, AI in UX research is most useful when teams treat it as support for the workflow, not as a replacement for researcher judgment.

When teams should not rely on AI moderated research alone

AI-supported methods can be useful, but they should not become the default for every study. Some kinds of research depend too heavily on human judgment, trust-building, and real-time interpretation.

Sensitive or emotional topics

If the study touches on frustration, identity, stress, trust, or vulnerable experiences, human moderation is often the safer and stronger choice. Skilled researchers can adjust tone, respond with empathy, and explore sensitive moments with more care.

Deeply nuanced behavior

Some user behavior is hard to understand without noticing hesitation, contradiction, or context that sits beneath the spoken answer. These studies often need stronger human interpretation during the conversation itself.

Research that depends on rapport

Not every participant opens up right away. Some conversations become valuable only after trust has been built. In those cases, human presence can meaningfully improve the quality of the research.

High-context or high-stakes conversations

Research with expert users, senior stakeholders, or strategically important customer groups often benefits from more flexible and context-aware moderation than structured AI-led flows can provide.

This is where good method choice matters most. The question is not just whether AI can run a study. It is whether it is the right method for that study.

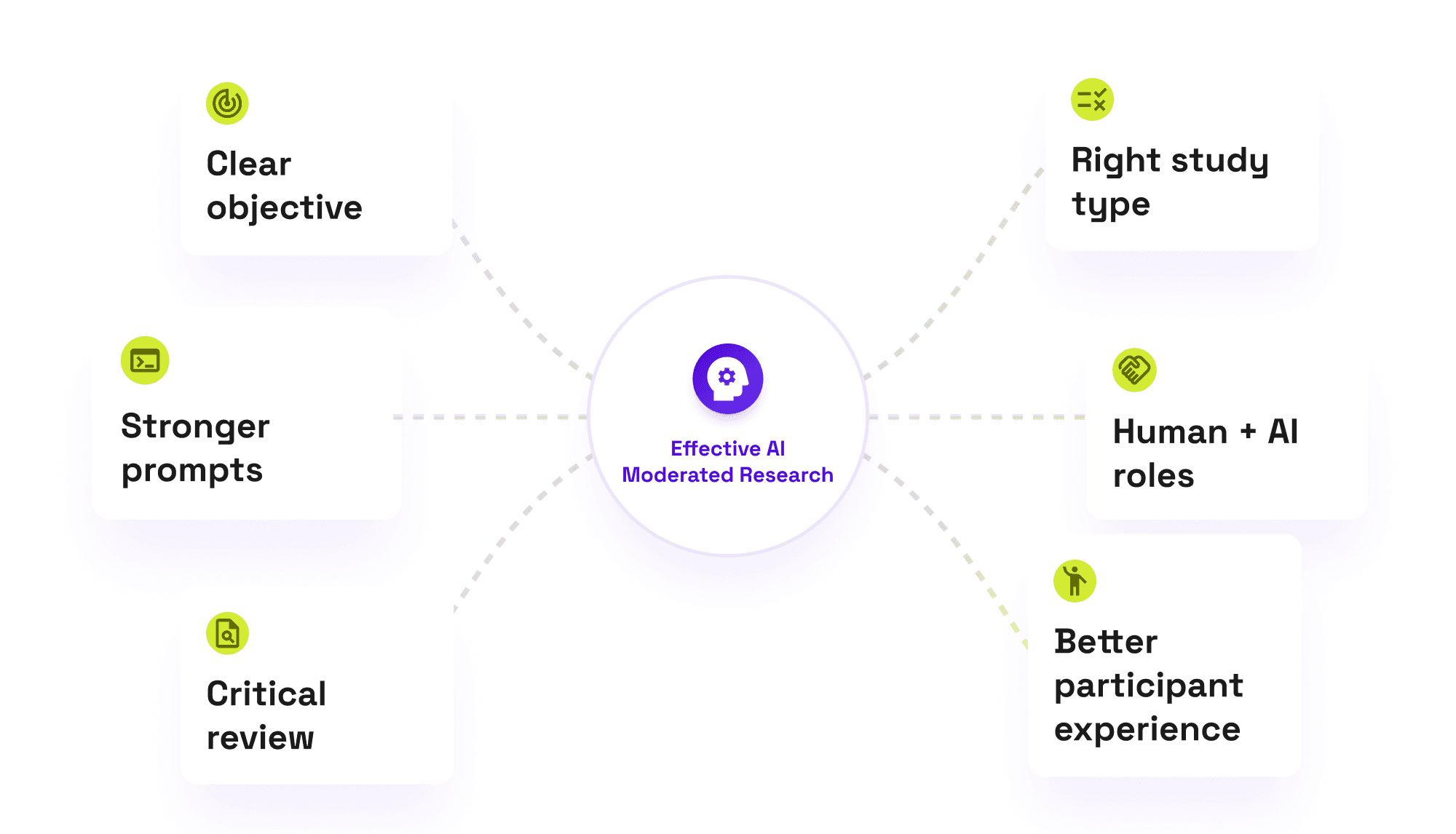

How teams can use AI moderated research more effectively

The success of AI moderated research depends heavily on study design. Teams usually get more value when they focus less on novelty and more on clarity.

Start with a clear research objective. AI is not a shortcut around fuzzy thinking. If the team is unclear about what it wants to learn, faster moderation will not solve that problem.

Choose the right study type. AI-supported moderation tends to work better for structured, scoped studies than for open-ended, highly sensitive, or deeply exploratory conversations.

Write stronger prompts and discussion guides. Even when AI is helping lead a study, the quality of the research still depends on the quality of the questions. Clear prompts, good sequencing, and thoughtful follow-ups matter.

Be deliberate about where AI should lead and where humans should step in. Some teams get better results from hybrid setups, where AI supports collection or early synthesis while researchers stay closely involved in design, interpretation, or follow-up.

Review outputs critically. AI-generated summaries and themes can be useful, but they should not be treated as unquestioned truth. Researchers still need to validate, interpret, and connect findings to the product and business context.

Keep the participant experience in mind. A method that works operationally but feels confusing or impersonal can still weaken the quality of the study.

Teams should also watch for common mistakes. One is choosing AI moderation for the wrong kind of study. Another is assuming AI summaries are final answers rather than starting points. Some teams also overestimate what AI can capture in nuanced conversations, or underestimate how much good guide design still matters.

Used well, AI applications in UX research can reduce friction in the workflow. Used carelessly, they can simply accelerate weak research design.

Final thoughts

AI is changing how many teams approach research, but the real opportunity is not about using AI everywhere. It is about using it well.

In UX research specifically, AI can support planning, moderation, synthesis, and ongoing learning. But not every research question should be approached the same way, and not every study benefits from AI moderation.

AI moderated research is most useful when the study is structured, the scope is clear, and the team needs faster or broader learning. It is less useful when the work depends heavily on empathy, trust, subtle interpretation, or high-context judgment.

For most teams, the strongest approach will be a hybrid one. AI can reduce repetitive effort and support scale, while researchers still shape the question, interpret nuance, and decide what the findings actually mean.

If your team is exploring how to use AI for UX research, that is the right mindset to start with: not “Where can we automate?” but “Where does this method genuinely help us learn better?”

FAQs

What is AI in UX research?

AI in UX research refers to using AI to support different parts of the research process, such as planning, moderation, synthesis, analysis, and reporting.

What is AI moderated research?

AI moderated research is a method where AI helps guide participant interactions in a structured study, often through prompts, follow-up questions, or moderated qualitative workflows.

When should teams use AI moderated research?

Teams should consider AI moderated research when they need quick exploratory input, repeated feedback loops, lightweight usability follow-up, or broader qualitative coverage with a clearly defined goal.

Can AI replace UX researchers?

No. AI can support parts of the workflow, but researchers still play a central role in study design, interpretation, participant care, and decision-making.

What are the limitations of AI in UX research?

AI can be less effective in highly sensitive, nuanced, trust-based, or high-context research scenarios where human moderation and judgment matter more.

How do teams use AI in UX research workflows?

Teams often use AI to support research planning, guide creation, moderation for scoped studies, synthesis, and reporting. In many cases, the most effective approach combines AI support with human oversight.
