Tag: Technology

Read Time: 7 Minutes

Content: Entropik Team

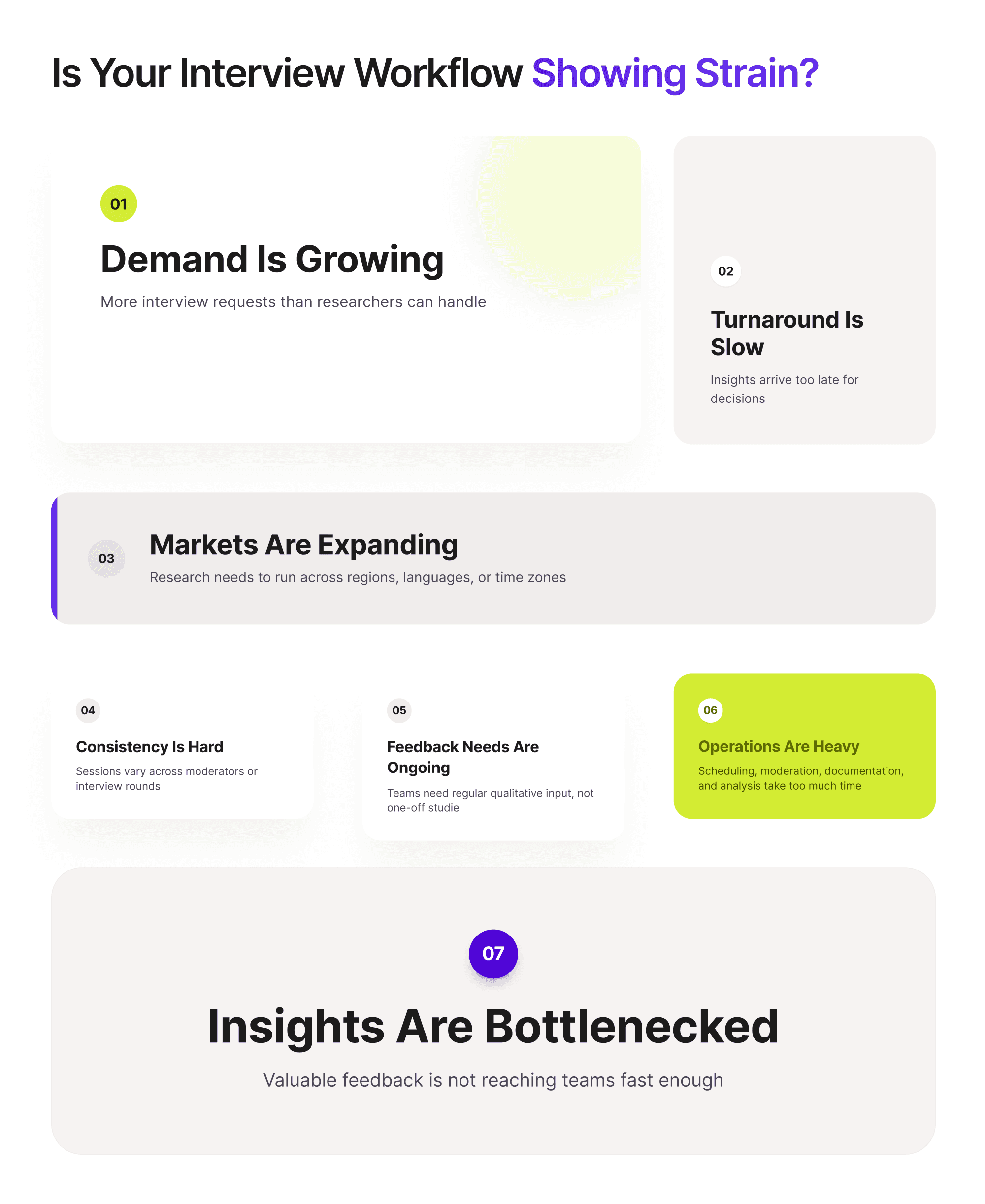

Why Teams Start Evaluating a New Approach

Most teams do not suddenly decide they need AI moderated interviews. The shift usually starts when their current research workflow begins to show signs of strain.

Interviews are taking too long to run. Moderator capacity is becoming a constraint. Research requests are piling up faster than the team can execute them. Studies need to run across more markets, more participants, or tighter timelines than before. What once felt manageable starts to feel slow, expensive, and difficult to scale.

That is often when teams begin exploring new qualitative research tools.

This does not mean every team needs AI moderation. It also does not mean traditional approaches have stopped working. But there are clear situations where AI moderated interviews can become a practical option worth evaluating.

This guide is designed to help with that decision. If your team is trying to figure out whether its current workflow has outgrown traditional qualitative research interviews, this guide does not push a one-size-fits-all answer. Its goal is to help you assess whether AI moderation fits the kind of research you need to do.

Why Teams Start Looking for New Qualitative Research Tools

Teams usually start evaluating new qualitative research tools when one or more core parts of their workflow begin to slow them down.

In smaller studies, traditional qualitative research interviews often work well. A skilled moderator can go deep, adapt in the moment, and surface rich nuance. But as demand grows, the same workflow can become harder to sustain.

Common pressures include:

more interview requests from product, UX, marketing, or insights teams

limited moderator bandwidth

the need to hear from more participants

faster business timelines

expanding research across countries or languages

repeated manual effort in recruiting, moderation, and analysis

At that point, the question is no longer whether qualitative research is useful. It is whether the current way of running it is still practical.

What AI Moderated Interviews Actually Solve

AI moderated interviews do not solve every research problem. But they do solve a particular type of workflow challenge.

They are most useful when teams want richer feedback than a survey can provide, but cannot always rely on live human moderation for every interview.

Instead of requiring a human researcher to guide each session, AI can help by:

asking structured questions

adapting follow-ups based on participant responses

supporting interviews at greater scale

reducing dependency on moderator availability

helping teams collect feedback across markets and time zones

In other words, moderated interviews led by AI are often less about replacing all human-led research and more about making certain kinds of qualitative work more practical.

If your biggest challenge is depth in a highly sensitive conversation, AI may not be the answer. But if your challenge is volume, consistency, or speed, AI moderation may be worth evaluating.

7 Signs Your Team May Need AI Moderated Interviews

1. Interview demand is outgrowing moderator capacity

If teams across the business want more interviews than your researchers can realistically run, review, and synthesize, the workflow will start to bottleneck. Even strong research teams can only moderate so many sessions in a given week.

When moderation capacity becomes the limiting factor, AI moderated interviews may help expand what the team can handle.

2. Research turnaround is too slow

Sometimes the issue is not the number of interviews. It is the time required to get answers.

If it takes too long to schedule interviews, conduct them, and move findings back to decision-makers, research can lose relevance. Product and marketing teams may move on before the insights are ready.

When speed becomes critical, AI moderation can help reduce delays tied to scheduling and availability.

3. Studies need to run across markets or languages

Global research creates coordination complexity quickly.

If your team needs to gather feedback across multiple regions, languages, or time zones, traditional interviewing can become much more operationally heavy. This is where AI can be especially useful as part of a broader research automation approach.

The challenge is not just collecting feedback. It is doing so in a way that stays manageable as scope expands.

4. Interview consistency is becoming a problem

Human moderators bring valuable judgment, but variation across sessions is real. Different moderators may probe differently, emphasize different areas, or shape the tone of interviews in slightly different ways.

That is not always a problem. But when comparability across interviews matters, more standardized moderation can be useful.

If consistency has become an issue, AI moderation may offer a more structured approach.

5. You need more frequent qualitative input, not just one-off studies

Some teams no longer want qualitative research only for major projects. They want a more ongoing stream of feedback.

If your organization needs regular pulses of customer, user, or audience insight, a workflow built only around manually scheduled interviews may become too heavy. This is where conversational AI research becomes more relevant.

AI moderation can make repeated research more feasible by lowering the operational burden of running interviews over and over again.

6. Manual moderation is slowing down research operations

Some research teams are not blocked by demand alone. They are blocked by the amount of manual effort needed to keep work moving.

Scheduling, live moderation, documentation, and analysis all add up. If too much time is being spent on repeat operational work, it may be time to evaluate whether a more scalable method would help.

This is not just a moderation issue. It is a workflow issue.

7. The value of qualitative feedback is limited by bottlenecks

Sometimes teams are already collecting meaningful feedback, but not fast enough or broadly enough to influence decisions at the right time.

In those cases, the problem is not that qualitative research is weak. It is that the current process is preventing the business from getting the full value from it.

If that sounds familiar, AI moderation may be worth evaluating as a way to reduce friction between research demand and research output.

When AI Moderated Interviews Are Probably Not the Right Fit

A practical evaluation guide should also be clear about when AI is not the best answer.

There are still situations where human moderators are likely the better choice, especially when the research depends heavily on:

sensitive or emotionally delicate topics

live trust-building

complex stakeholder dynamics

highly layered exploratory conversations

deep interpersonal judgment in the moment

In these contexts, a human moderator can often respond more carefully and flexibly than an AI-led system.

So this is not a question of whether AI is always better. It is a question of fit. Teams should evaluate the method against the research goal, not just the promise of greater efficiency.

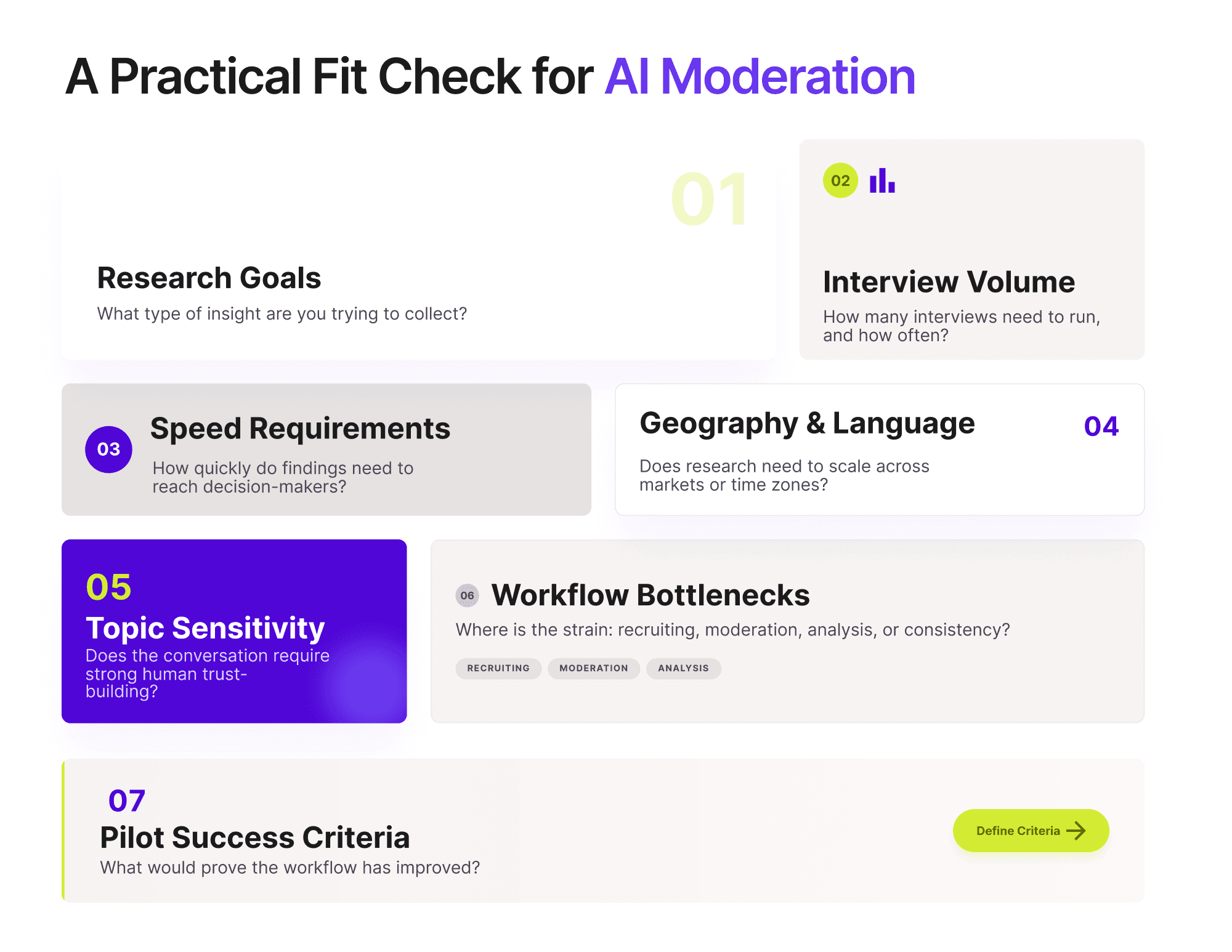

How to Evaluate Fit Before Adopting AI Moderation

If your team is seriously considering whether this approach makes sense, a useful evaluation should look at several factors.

Research goals

What type of insight are you trying to collect? Are you looking for broad directional input, recurring feedback, early concept reactions, or highly sensitive exploratory depth?

Interview volume

How many interviews do you need to run, and how often? Scale is one of the biggest factors in whether AI moderation will create enough value to justify adopting it.

Speed requirements

How quickly do findings need to get back into product, UX, brand, or business decisions?

Geography and language

If research needs to run across multiple markets, language support and coordination effort become part of the evaluation.

Sensitivity of topics

Not every topic is equally suited to AI-led moderation. Some types of conversations still require stronger human presence.

Workflow bottlenecks

Where is the actual strain today: recruitment, moderation, throughput, analysis, or consistency?

Pilot success criteria

Before adopting any new approach, teams should define what success would look like.

This is where the broader landscape of qualitative research tools matters. The right choice is not simply the most innovative option. It is the one that best fits the team’s real constraints and goals.

If your evaluation includes scale, repeatability, and operational efficiency, AI moderated user interviews may be worth testing in a structured pilot.

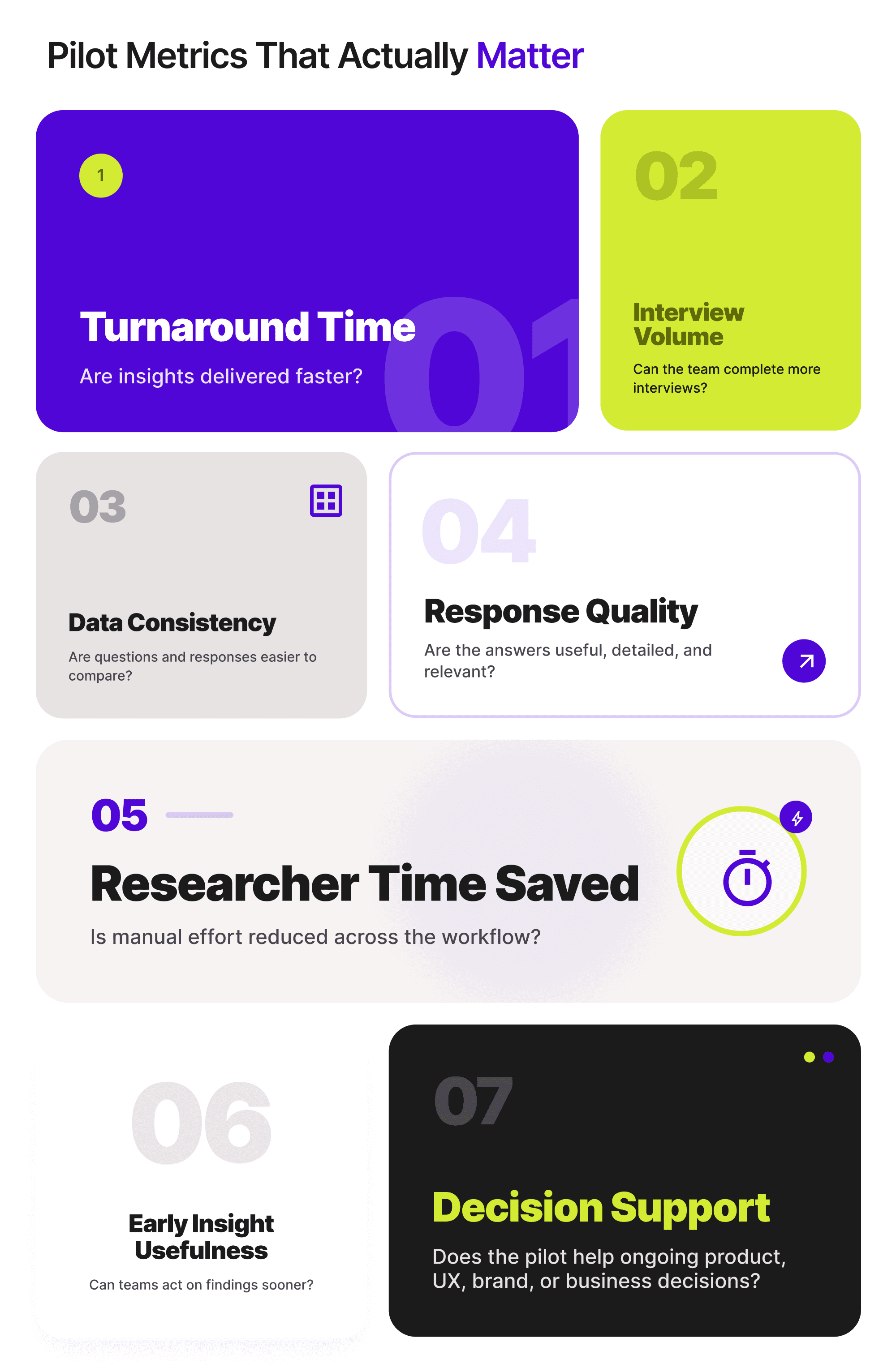

What a Good Pilot Should Measure

A good pilot should not only ask whether the tool works. It should ask whether the workflow improves in meaningful ways.

Useful pilot metrics may include:

turnaround time

interview completion volume

consistency of data collection

quality and usability of responses

researcher time saved

usefulness of early insights

ability to support ongoing decision-making

The point is not to prove that AI should replace all traditional methods. The point is to determine whether it meaningfully improves the workflow where your current process is under strain.

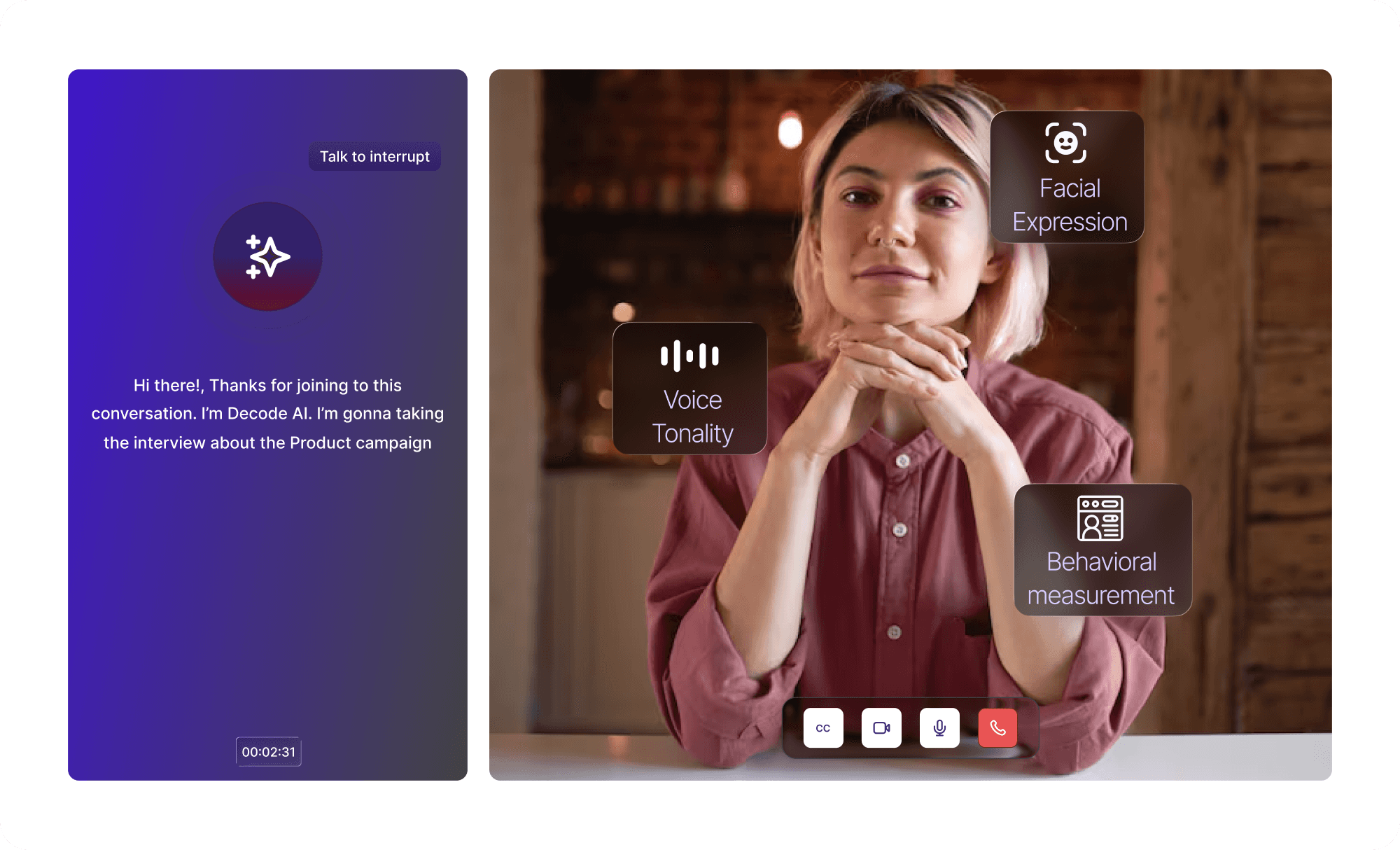

How Decode by Entropik Helps

If these workflow signs feel familiar, Decode AI Moderator can help teams make qualitative research more scalable and easier to manage.

Decode helps teams:

run interviews at scale

use adaptive questioning based on participant responses

support multilingual research across markets

capture emotional and behavioral signals alongside open-ended feedback

reduce manual effort across the research workflow

turn responses into insights faster through real-time insight generation

That makes Decode AI Moderator useful for teams that are not just trying to collect more interviews, but are trying to build a more practical and sustainable qualitative workflow.

Final Thoughts

Not every team needs AI moderation immediately.

The better question is whether your current workflow is starting to show signs of strain. If speed, scale, consistency, and operational effort are becoming bigger problems, it may be time to evaluate whether a different approach fits better.

That is where AI moderated interviews can become useful.

The strongest teams will not choose AI moderation just because it is new. They will choose it when it clearly solves the kind of research workflow problem they actually have.