Tag: Technology

Read Time: 7 Minutes

Entropik Team

Why Early-Stage Concept Testing Often Feels Harder Than It Should

Early-stage ideas need feedback before teams invest too much time, budget, or internal alignment in them. That is what makes concept testing so important. It helps teams understand whether a concept feels clear, relevant, differentiated, or worth pursuing before it moves further down the pipeline.

But early-stage feedback is often harder to get right than teams expect. Surveys can be fast, but they may only capture surface-level reactions. Traditional interviews can bring depth, but they also take more time to schedule, moderate, and synthesize. Teams can end up choosing between speed and richness when they really need both.

This is where AI-moderated interviews can become useful in concept testing. They give teams a way to gather richer early-stage feedback without building a fully manual interview workflow for every round of exploration. That can make concept testing more practical, especially when teams need directional input quickly.

Why Early-Stage Concept Testing Is Often Harder Than It Looks

At first glance, concept testing can seem simple. Put an idea in front of people, ask what they think, and use the feedback to decide what to do next.

In practice, it is rarely that straightforward.

Good concept testing research is not only about whether people say they like an idea. It is about understanding how they interpret it, what feels unclear, what creates interest, what creates hesitation, and what might stop the concept from working in the real world.

Teams often need to learn:

whether the concept is immediately understandable

whether the value feels relevant

what part of the idea stands out most

what feels confusing, weak, or unconvincing

whether the concept is strong enough to explore further

That kind of feedback is more nuanced than simple preference testing. It often needs probing, clarification, and open-ended exploration to become useful.

Where Traditional Concept Testing Methods Break Down

Many common concept testing methods are useful, but they do not always work well for every stage of idea development.

Closed-ended testing can be too shallow

A survey can tell you whether a concept scores well on appeal or relevance, but it may not tell you why. In early-stage idea testing, that can be a real limitation. A concept may get weak scores without revealing what exactly is missing, or it may get decent scores while still carrying confusion that becomes a problem later.

Manual interviews can be too heavy

At the other extreme, live moderated interviews can provide stronger depth, but they also take time and resources. That makes them harder to use when teams need fast concept feedback across more participants or multiple rounds of exploration.

Probing is often inconsistent

In early-stage concept evaluation, follow-up questions matter. But when interviews are run manually, probing can vary across moderators and sessions. That can make it harder to compare responses consistently.

Operational effort slows learning

When a team is testing multiple ideas, messages, or concept directions, the process can quickly become slow. Recruiting, moderation, and analysis all add time. That can delay decisions at exactly the stage where teams need to learn quickly and refine fast.

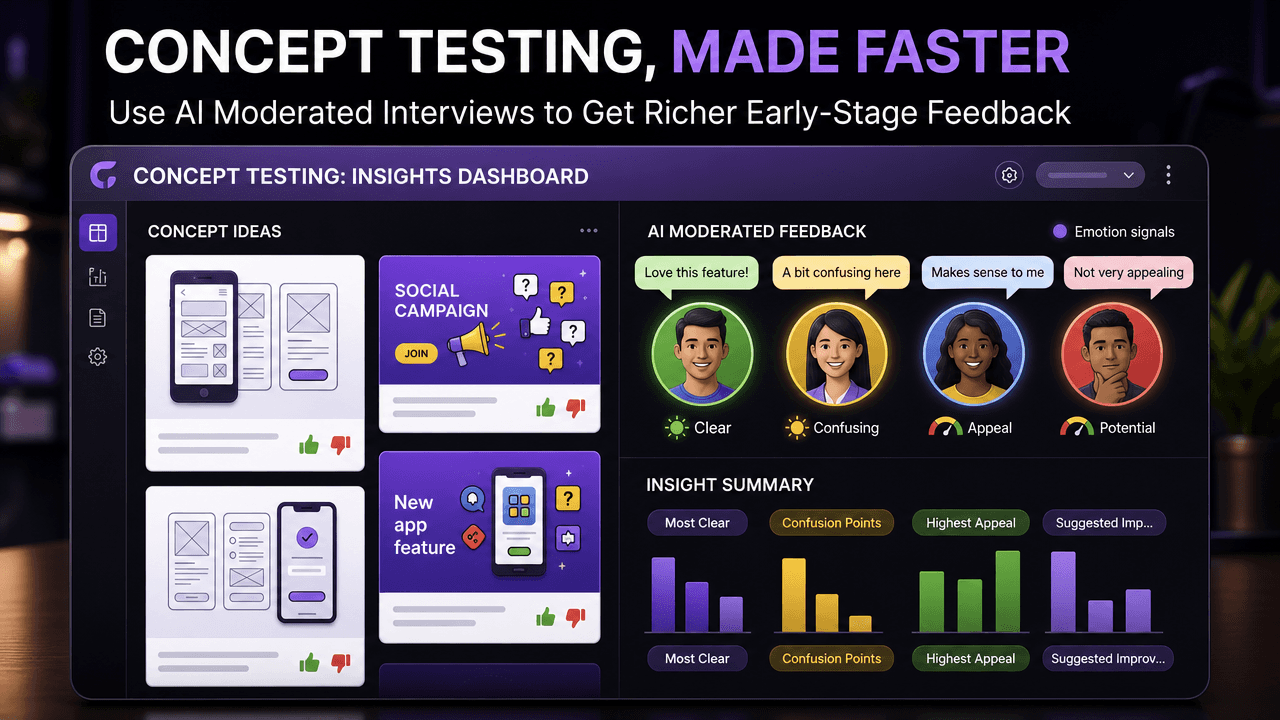

How AI-Moderated Interviews Help in Concept Testing

This is where AI-moderated interviews can be especially useful. They do not replace strong research design, but they can make product concept testing more scalable and easier to run.

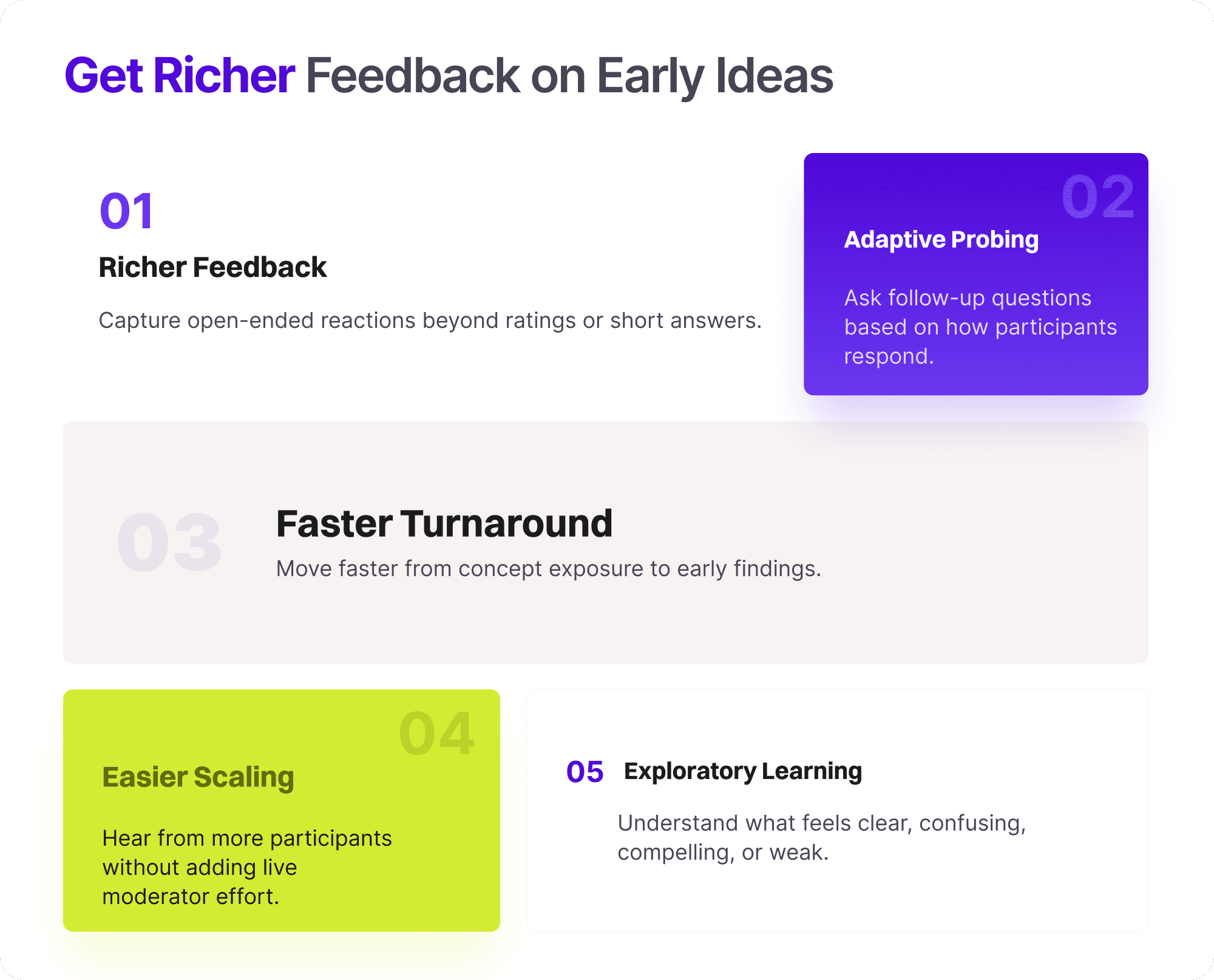

Richer early-stage feedback than surveys alone

Instead of collecting only ratings or short written responses, AI-moderated interviews can help teams gather more open-ended reactions to a concept. That makes it easier to understand where confusion, curiosity, hesitation, and appeal come from.

Adaptive probing

A concept often needs follow-up questions to reveal what participants actually mean. AI moderation can help by adapting questions based on how participants respond, which makes concept feedback more informative than a static questionnaire.

Faster turnaround

When teams need directional input quickly, waiting on a fully manual interview process can slow down decisions. AI moderation can help teams move faster from concept exposure to early reactions and findings.

Easier scaling across participants

Concept testing often benefits from hearing from more than a handful of people, especially when teams want to compare reactions across segments. AI moderation can make that easier without requiring the same level of live moderator effort for every session.

Better support for exploratory reactions

At the early stage, teams often do not need a final answer. They need directional learning. AI-moderated interviews can help uncover what people understand, what they miss, what feels compelling, and what needs work before the concept moves forward.

What AI Still Does Not Solve on Its Own

AI can make concept testing more practical, but it does not solve every part of the problem on its own.

Strong concept framing still matters

If the concept itself is poorly written, unclear, or overloaded, better moderation will not fix that. Teams still need to define what they are testing and how they present it.

Question design still matters

The quality of feedback depends heavily on the quality of the questions. Good idea validation still requires thoughtful prompts, careful structure, and clarity about what the team is trying to learn.

Researcher interpretation still matters

Even when AI helps collect and structure responses, researchers still need to decide what matters, what patterns are meaningful, and what the business should do next.

Final judgment still needs context

A concept that gets positive responses may still be wrong for the market, the brand, or the product strategy. AI can support faster feedback, but it does not replace strategic judgment.

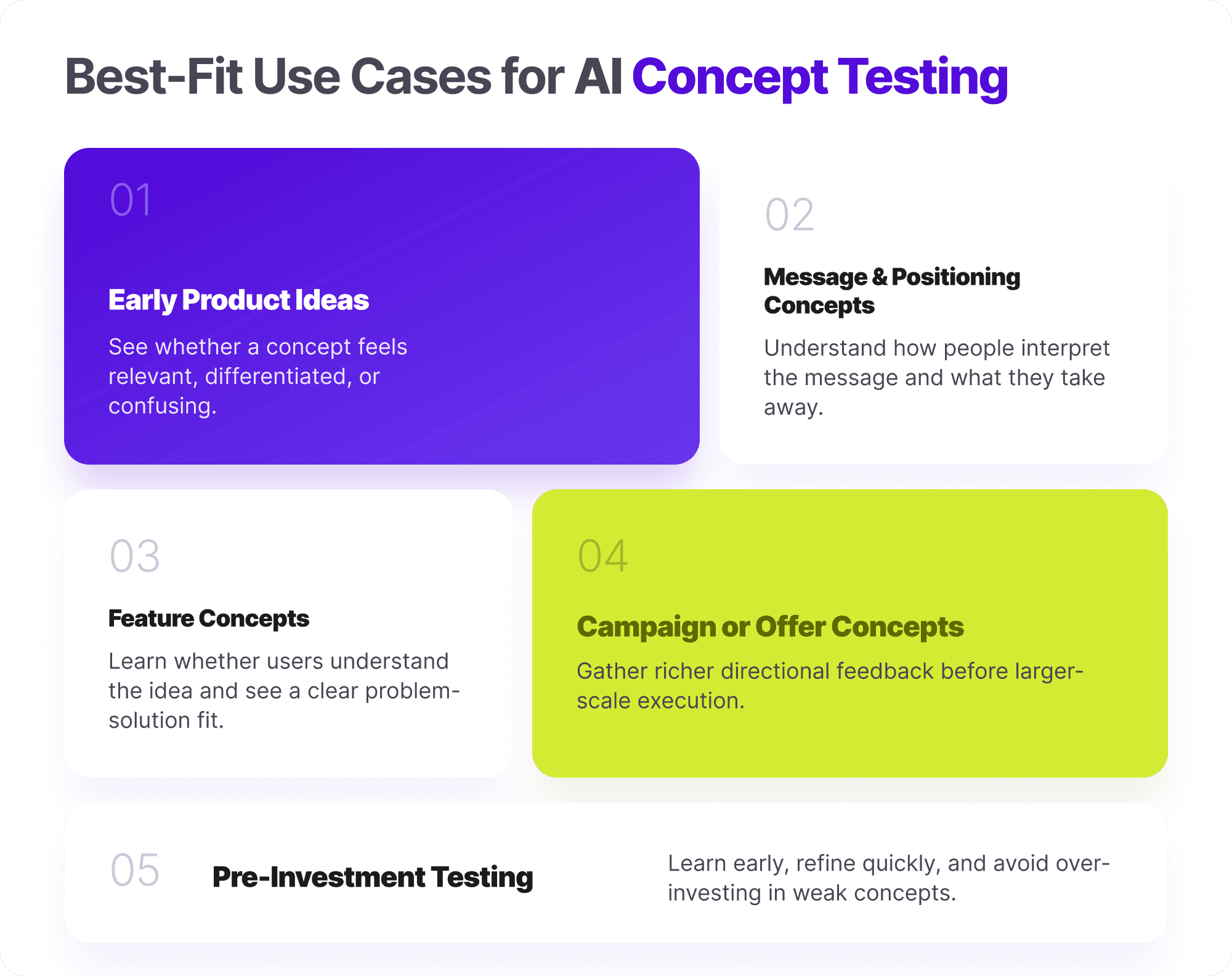

When This Approach Makes the Most Sense

There are several cases where AI-moderated concept testing can be especially useful.

Early product ideas

When teams want to understand whether a product concept feels relevant, differentiated, or confusing before deeper investment, AI moderation can help gather early reactions quickly.

Message or positioning concepts

Positioning ideas often need more than a top-box score. Teams need to hear how people interpret the message, what they take away from it, and where it breaks.

Feature concepts

When testing new features, teams often want to know not just whether users like the idea, but whether they understand it, whether it solves a clear problem, and what concerns come up first.

Campaign or offer concepts

Teams evaluating campaign directions, offers, or creative ideas can use AI-moderated interviews to get richer directional feedback before moving into larger-scale execution.

Directional testing before bigger investment

In general, this approach works best when the goal is to learn early, refine quickly, and avoid overinvesting in weak concepts. That is where concept testing becomes especially valuable.

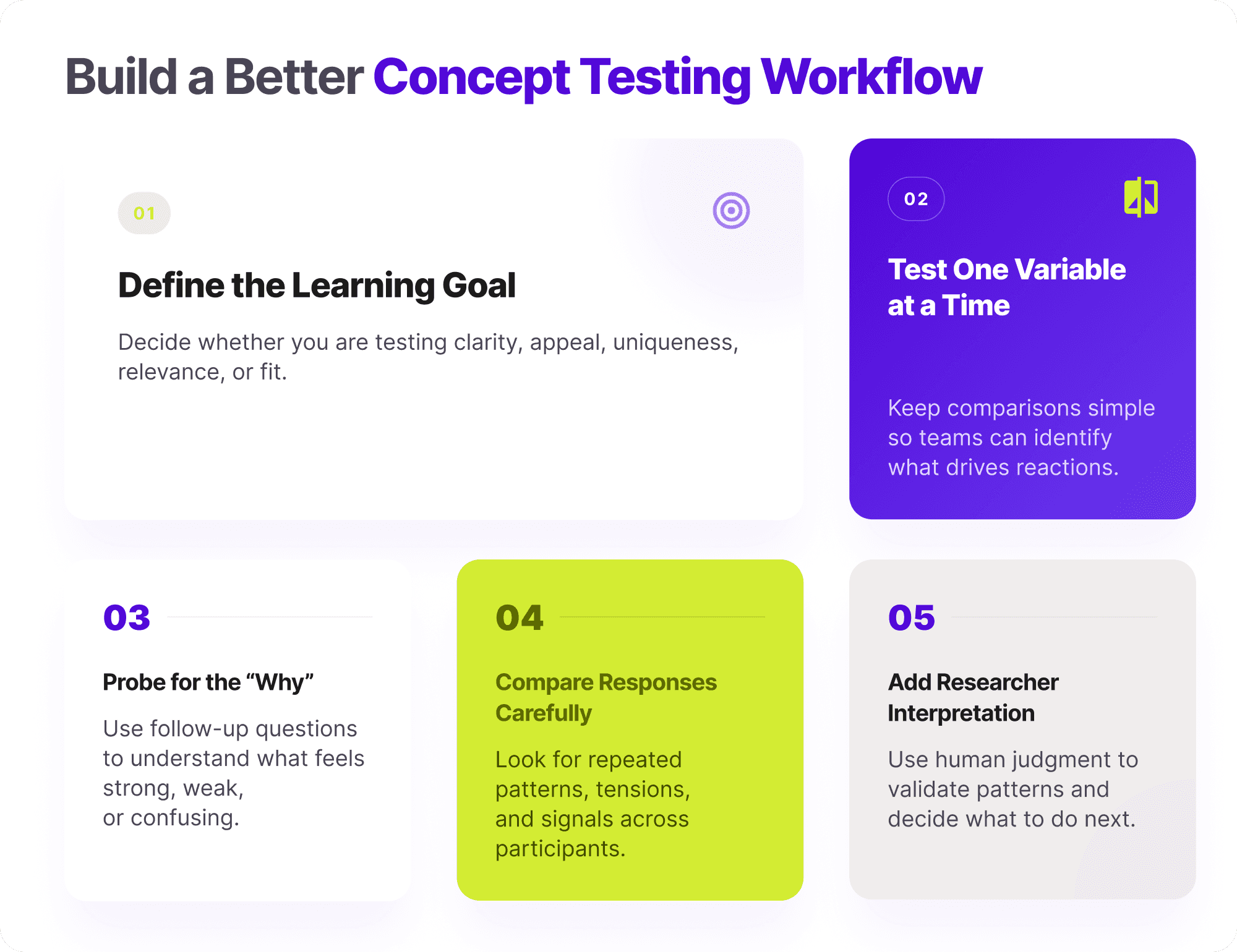

Best Practices for Running Concept Testing With AI-Moderated Interviews

Teams usually get better results when they use AI moderation as part of a thoughtful testing workflow rather than treating it as a shortcut.

Define what you need to learn first

Before testing begins, be clear about what the concept needs to prove. Are you testing clarity, appeal, uniqueness, relevance, or likely fit? The more specific the learning goal, the more useful the feedback becomes.

Test one variable at a time where possible

If too many elements change at once, it becomes harder to understand what is driving reactions. Simpler comparisons usually lead to better insight.

Use probing to understand why reactions happen

A concept score alone rarely tells the full story. Strong probing helps teams understand why a concept feels strong, weak, confusing, or compelling.

Compare responses carefully

When teams hear from multiple participants, the goal is not just to collect more comments. It is to identify repeated patterns, important tensions, and signals that can shape the next iteration.

Combine AI speed with researcher interpretation

AI can make the workflow faster and easier to scale, but human researchers still need to interpret patterns, challenge weak conclusions, and decide what to do next.

How Decode by Entropik Helps

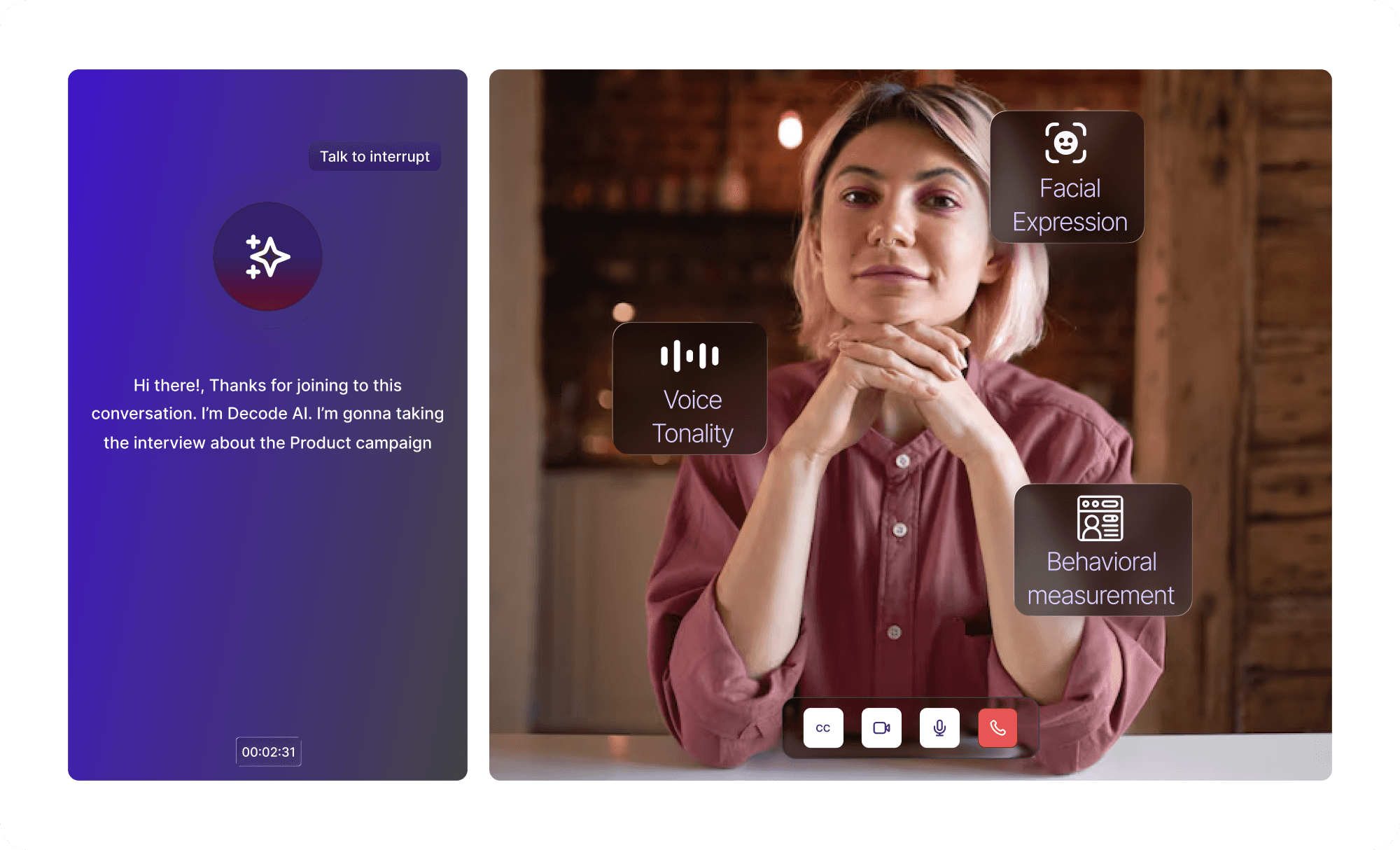

For teams running concept testing, Decode AI Moderator can make early-stage feedback more scalable and easier to manage.

With Decode AI Moderator, teams can:

run concept interviews at scale

use adaptive questioning based on participant responses

capture emotional and behavioral signals alongside verbal feedback

reduce inconsistency by applying structured, bias-free moderation across sessions

let participants complete interviews anytime, anywhere

get preliminary insights as interviews complete

support response analysis and reporting across concept interviews

That makes Decode AI Moderator useful for teams that want more than surface-level concept feedback, but do not want every round of testing to depend on a fully manual interview workflow.

Final Thoughts

Early-stage concept testing needs more than surface reactions. Teams often need to understand what people actually mean, what creates interest, what creates confusion, and what should change before the concept moves forward.

That is where AI-moderated interviews can help.

They can make concept testing faster, more practical, and better suited to early-stage exploration. But strong design, good questions, and thoughtful interpretation still matter.

The teams that do this well will not just test more concepts. They will make better decisions about which ideas deserve to move forward.